correlation <- 0.5

alpha <- 0.05

trials <- 1000

results <- tibble(n = seq(10, 100, by = 5)) |>

mutate(

sims = map(n, \(n) {

tibble(trial = 1:trials) |>

mutate(

data = map(trial, \(t) {

MASS::mvrnorm(n, mu = c(0, 0),

Sigma = matrix(c(1, correlation, correlation, 1), 2, 2)) |>

as_tibble(.name_repair = ~c("happiness", "optimism"))

}),

p_value = map_dbl(data, \(d) cor.test(d$happiness, d$optimism)$p.value)

)

}),

achieved_power = map_dbl(sims, \(s) mean(s$p_value < alpha))

) |>

select(n, achieved_power)Monte Carlo Simulation

EPH 752 Advanced Research Methods

Can a computer solve probability?

The Big Question

What if, instead of deriving complex mathematical formulas, we could just…

- Try it thousands of times

- Count how often something happens

- Estimate the probability from the results

This is the fundamental idea behind Monte Carlo simulation.

The Core Insight

If you flip a coin 10 times, you might get 6 heads. That’s not 50/50.

But if you flip it 1,000 times, the ratio will settle close to 50/50.

The more you try, the closer you get to the truth.

The Simplest Case: A Coin Flip

When you flip a coin 10 times, you might get 6 heads and 4 tails — obviously not 50/50.

But if you keep flipping:

- At first the ratio jumps all over the place

- After many flips, it slowly settles down

- After 100 flips: maybe 51 heads, 49 tails

The Law of Large Numbers

As the number of random trials increases, the average result will converge to the expected value.

First proven by Jacob Bernoulli in 1713, a foundational idea in probability for over 200 years.

But Bernoulli only proved this works for independent events. What about the real world, where almost everything depends on something else?

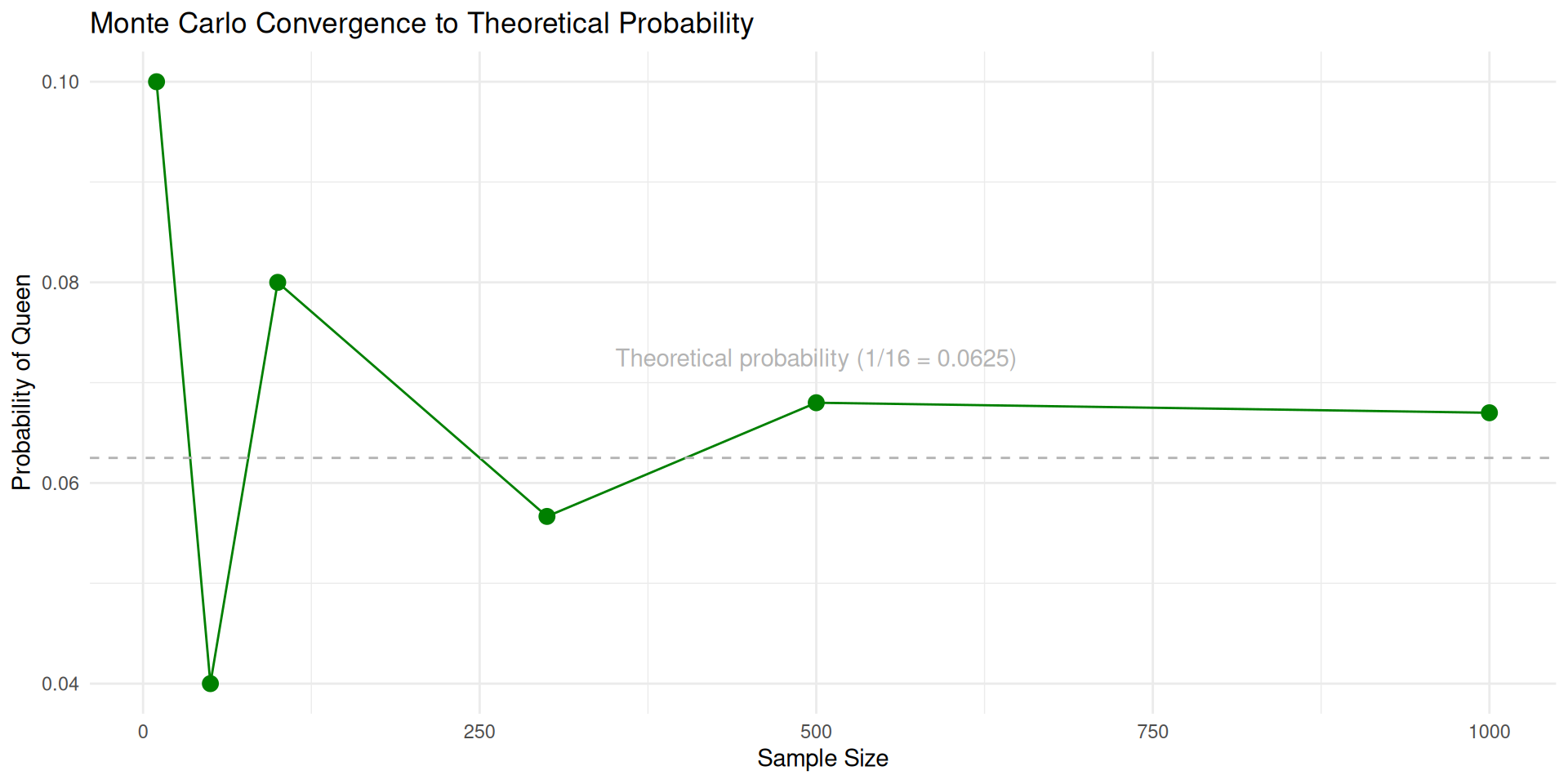

Let’s Try It with a Chess Set

What is the probability of picking the Queen?

Mathematical Solution

\[P(\text{Queen}) = \frac{\text{Number of Queens}}{\text{Total Chess Pieces}} = \frac{1}{16} = 0.0625\]

This is easy, we can solve it with a formula. But what if the problem were harder?

Simulation Approach

Instead of calculating, we just:

- Randomly pick a piece from the bag

- Write down what we got

- Put it back and repeat, many times

- Count how often we got the Queen

As samples increase, our estimate approaches 1/16 = 0.0625.

Let’s Try: 10 Samples

First, how many samples do we want to try?

Now we can simulate the process:

Results with 10 Samples

Let’s Try: 100 Samples

Let’s Try: 500 Samples

Watching Convergence

More samples → closer to the true probability. That’s the Law of Large Numbers in action.

As sample size increases, Monte Carlo estimates converge to theoretical probabilities (Law of Large Numbers). The computer does the hard work for us.

Now Make It Harder: Two Bishops

Mathematical Solution

\[P(\text{Both Bishops}) = \frac{\binom{2}{2}}{\binom{16}{2}} = \frac{1}{120} \approx 0.0083\]

Where:

\(\binom{2}{2}\) = Ways to choose 2 bishops from 2 bishops = 1

\(\binom{16}{2}\) = Ways to choose 2 pieces from 16 pieces = 120

Simulation Approach

We repeatedly sample 2 pieces from the chess set and count how often we get both bishops.

No formula needed, just sampling and counting.

Even Harder: KING Between Two PAWNs

\[P(\text{PAWN, KING, PAWN}) = \frac{\binom{8}{2} \times \binom{1}{1}}{\binom{16}{3}} \times \frac{1}{3!} = \frac{28 \times 1}{560} \times \frac{1}{6} \approx 0.0083\]

Where:

- \(\binom{8}{2}\) = Ways to choose 2 pawns from 8 pawns = 28

- \(\binom{1}{1}\) = Ways to choose 1 king from 1 king = 1

- \(\binom{16}{3}\) = Ways to choose 3 pieces from 16 pieces = 560

- \(\frac{1}{3!}\) = Probability of specific order (PAWN, KING, PAWN)

We repeatedly sample 3 pieces and check if they match the pattern:

- First piece is PAWN

- Second piece is KING

- Third piece is PAWN

No need to derive the formula, just simulate and count!

Simulating the KING Pattern

Results: KING Between Two PAWNs

The Pattern So Far

With Formulas:

- Exact answers

- Require complete mathematical specification

- Get very complex very fast

- Sometimes impossible for realistic problems

With Monte Carlo:

- Approximate answers

- Just simulate and count

- Handle any level of complexity

- Accuracy increases with more samples

Important

As sample size increases, Monte Carlo estimates converge to theoretical probabilities (Law of Large Numbers). The computer does the hard work for us.

A Math Dispute That Changed Everything

Russia, 1905

The Two Sides

Pavel Nekrasov, The Tsar of Probability

- Religious, powerful, defended the status quo

- Argued math could explain free will

Andrey Markov, Andrey the Furious

- Atheist, rigorous, had no patience for sloppy reasoning

- Called Nekrasov’s work “an abuse of mathematics”

The Argument: Independence and Free Will

Their dispute centered on the Law of Large Numbers, the same idea we just used with chess pieces.

Nekrasov’s reasoning: 1. Bernoulli proved the Law of Large Numbers works for independent events

2. Social statistics (marriage rates, crime rates) follow the Law of Large Numbers

3. Therefore, the decisions behind them must be independent 4. Independent decisions = free will

“Free will is not just philosophical, it’s measurable!”

Markov’s response:

Just because we see convergence doesn’t mean the events are independent.

He set out to prove that dependent events could also follow the Law of Large Numbers.

He needed an example where one event clearly depends on what came before…

Markov’s Counterattack: Poetry as Data

Markov turned to Eugene Onegin by Alexander Pushkin, a masterpiece of Russian literature.

He took the first 20,000 letters, stripped out punctuation and spaces, and analyzed them:

- 43% vowels, 57% consonants

- If letters were independent, vowel-vowel pairs should appear 18% of the time (0.43 × 0.43)

- But he found vowel-vowel pairs only 6% of the time

The letters were clearly dependent, what comes next depends on what came before.

The Test

If Markov could show that these dependent letters still followed the Law of Large Numbers, he would shatter Nekrasov’s argument.

And that’s exactly what he did.

The First Markov Chain

Markov built a prediction machine with just two states:

- Start at a vowel or consonant

- Look up the transition probability to the next state

- Generate a random number to decide what happens

- Repeat

After many steps, the ratio converged to 43% vowels and 57% consonants, exactly what he counted by hand.

Dependent events following the Law of Large Numbers.

Markov Chains

A sequence of events where the probability of each event depends only on the current state.

Try it: Markov Chain Visualization

“Free Will Is Not Necessary to Do Probability”

In fact, independence isn’t even necessary. With Markov chains, he found a way to do probability with dependent events.

This should have been a huge breakthrough, because in the real world, almost everything depends on something else:

- Weather tomorrow depends on conditions today

- How a disease spreads depends on who is infected now

- The behavior of particles depends on particles around them

But Markov himself didn’t seem to care much about applications.

“I am concerned only with questions of pure analysis. I refer to the question of applicability with indifference.”

Little did he know what would come next.

From Solitaire to the Atomic Bomb

Enrico Fermi’s Innovation (1930s)

- Pioneered “statistical sampling” to model neutron diffusion

- Used simple probabilistic methods before modern computers

- Created the foundation for particle physics simulations

Ulam’s Solitaire Moment (1946)

In January 1946, mathematician Stanislaw Ulam was struck by severe encephalitis. During his long recovery, he played countless games of Solitaire.

One question kept nagging him: What are the chances that a randomly-shuffled game can be won?

With 52 cards, there are 52! possible arrangements, about 8 × 1067. Solving this analytically was hopeless.

Then Ulam had a flash of insight: What if I just play hundreds of games and count how many can be won?

The Monte Carlo Insight

This is exactly what we did with chess pieces!

Instead of deriving a formula, just:

- Generate random scenarios

- Check the outcome

- Repeat many times

- Count the results

The key difference: now he wanted to apply this to nuclear physics.

The Manhattan Project Connection

Back at Los Alamos, scientists were trying to figure out how neutrons behave inside a nuclear core.

A neutron can:

- Scatter off an atom and keep traveling

- Get absorbed or leave the system

- Cause fission, splitting a uranium atom and releasing two or three more neutrons

Each step depends on the previous one, it’s a Markov chain.

Ulam shared his Solitaire idea with John von Neumann, who immediately saw its power: simulate the chain on a computer.

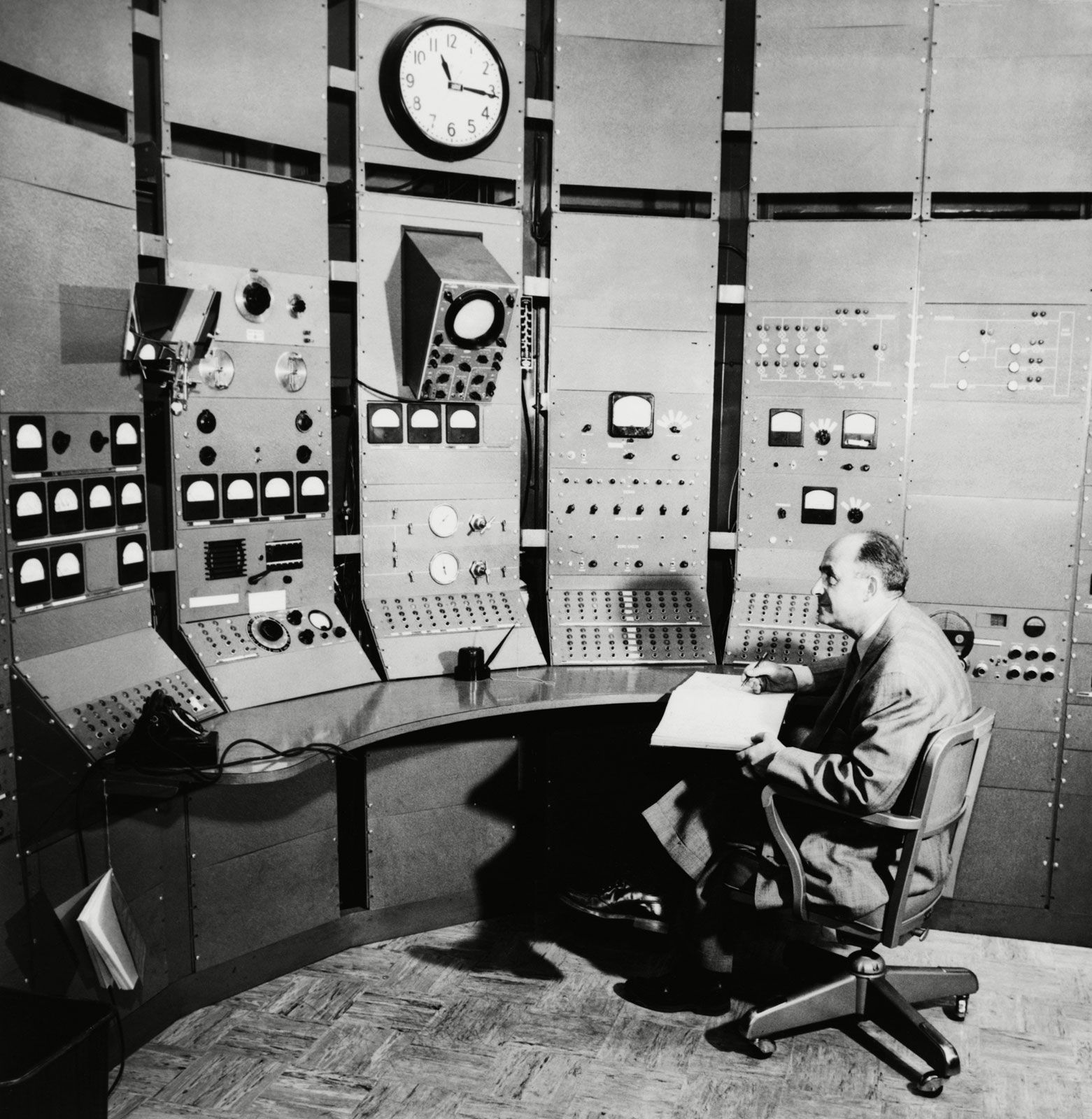

The Birth of Monte Carlo

Von Neumann and Ulam ran their neutron simulations on the ENIAC, one of the world’s first electronic computers.

They tracked the multiplication factor k:

- k < 1: reaction dies down

- k = 1: self-sustaining chain reaction

- k > 1: exponential growth — a bomb

The method needed a name. Ulam’s uncle was a gambler, and the random sampling reminded him of the Monte Carlo Casino in Monaco.

The Monte Carlo method was born.

The MANIAC Computer (1953)

Mathematical Analyzer, Numerical Integrator, And Computer

- 70,000 multiplications per second (modern computers: billions)

- Arianna Rosenbluth’s contribution often overlooked

Key Milestones After the Bomb

1955, RAND Corporation publishes A Million Random Digits, the first resource for random number generation

1970, W. Keith Hastings generalizes the Metropolis algorithm, enabling Bayesian applications

“A lot of statisticians were not oriented toward computing. They take these theoretical courses, crank out theoretical papers, and some of them want an exact answer.*

1991, Johnson & Gastwirth use Bayesian Monte Carlo to show why universal HIV screening would be counterproductive, even highly sensitive tests generate many false positives for rare diseases

Today, Hamiltonian Monte Carlo powers modern Bayesian software like Stan and PyMC, making complex statistical inference accessible to researchers everywhere

What Is Monte Carlo Simulation?

Putting It All Together

Monte Carlo simulation is a computational method that:

- Uses repeated random sampling to obtain numerical results

- Approximates solutions to problems too complex for formulas

- Generates countless “what-if” scenarios based on probability distributions

- Works by leveraging the Law of Large Numbers, more trials → better estimates

The Three Steps

Generate random inputs from probability distributions

Process those inputs through your model

Aggregate statistics from many iterations

Einstein Was Wrong (Sort of)

“Insanity is doing the same thing over and over and expecting different results.” Albert Einstein

But in Monte Carlo simulations, we do the same thing over and over and get different results, that’s the whole point!

- Each simulation run produces different random outcomes

- The collective results reveal the underlying probability distribution

- The more simulations we run, the closer we get to the truth

This is not insanity, it’s science.

Building More Complex Simulations

From Chess Pieces to Data Generation

So far we’ve sampled from a bag of chess pieces. But we can simulate anything:

- Continuous variables (heights, test scores, income)

- Binary outcomes (treatment/control, yes/no)

- Relationships between variables

- Entire regression models

Let’s build up, step by step.

Simulating a Continuous Variable

Visualizing the Distribution

Simulating a Binary Variable

Visualizing the Binary Variable

Creating a Relationship

Now let’s create a variable z that depends on both x and y:

\[z = 1.5 + 0.8 \times y + x\]

We know the true effect of y on z is 0.8. Can we recover it?

Recovering the True Effect

With all 300 observations, we should get close to 0.8.

But What Happens with Smaller Samples?

Each Sample Gives a Different Answer

Different samples → different estimates. This is sampling variability, and Monte Carlo helps us understand it.

Let’s Do This 60 Times

Run a Regression on Each Sample

Extract the Coefficients

The Sampling Distribution of Effects

Visualizing the Sampling Distribution

The dashed green line is our average estimate. The dotted red line is the true effect (0.8).

How Often Did We Underestimate?

How Often Did We Find No Effect or a Negative Effect?

This is the essence of statistical power: how often will our study detect an effect that truly exists?

Real-World Applications of Monte Carlo

Research Design Applications

Monte Carlo simulations help researchers make informed decisions about:

- Required sample sizes

- Minimum detectable effects

- Statistical power

- Impact of missing data

- Measurement reliability

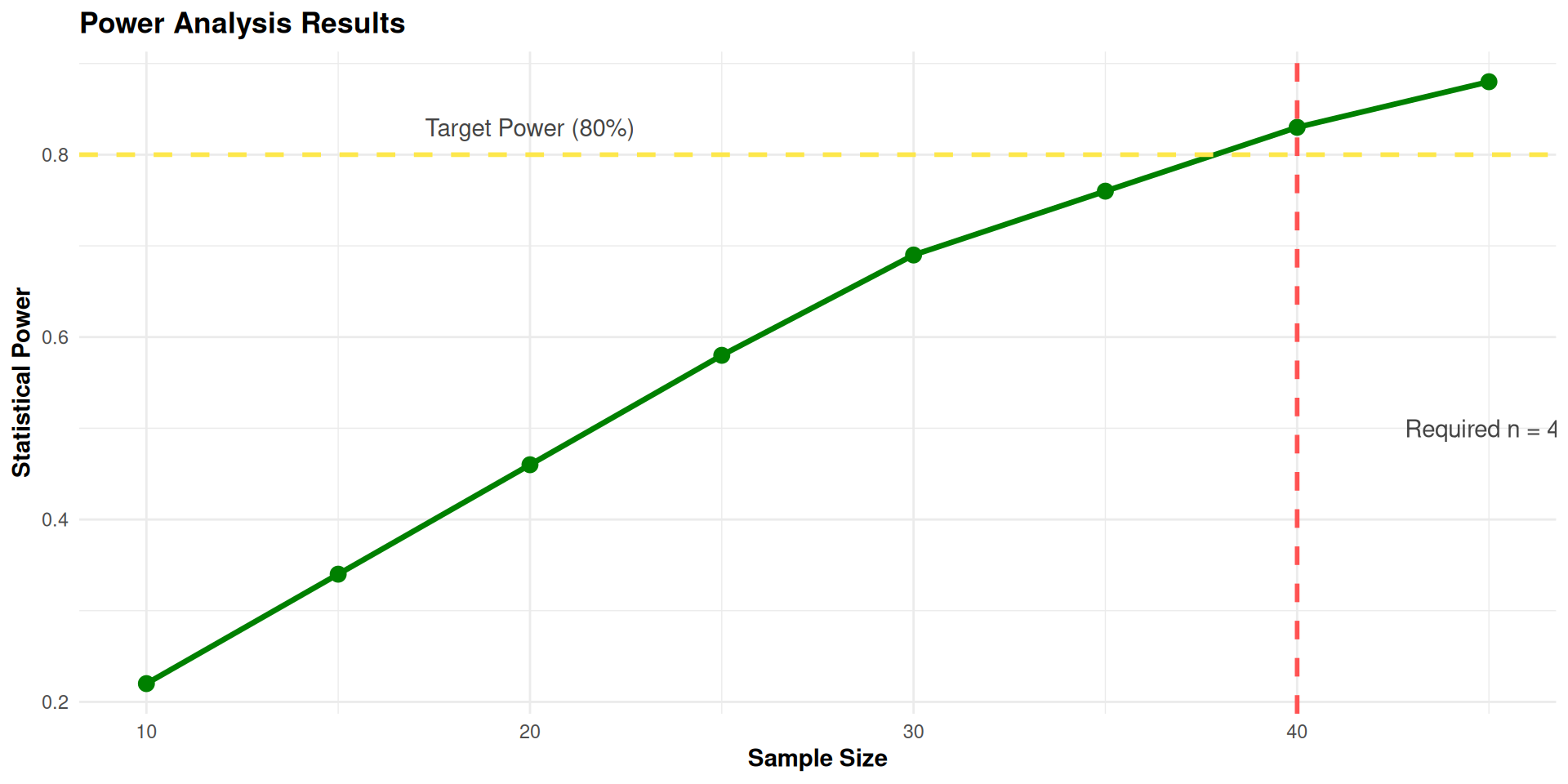

Application 1: Sample Size Determination

Question:

What sample size do we need to detect a correlation of 0.5 between happiness and optimism?

Fixed Parameters:

- Target correlation: 0.5

- Desired power: 0.8 (80%)

- Alpha level: 0.05

- Factor loading: 0.8 (reliability of measures)

- Missing data: none

Monte Carlo Approach

- Simulate data with the target correlation

- Run tests with increasing sample sizes

- Calculate power at each sample size

- Find minimum sample size with 80% power

Conclusion: To detect a correlation of 0.5 with 80% power:

- Minimum required sample size: 40 participants- With this sample size, we have an 83% chance of detecting the effect if it exists

- Reliability of our measures (0.8) helps reduce the needed sample size

- Without Monte Carlo, we might have overestimated the required sample size

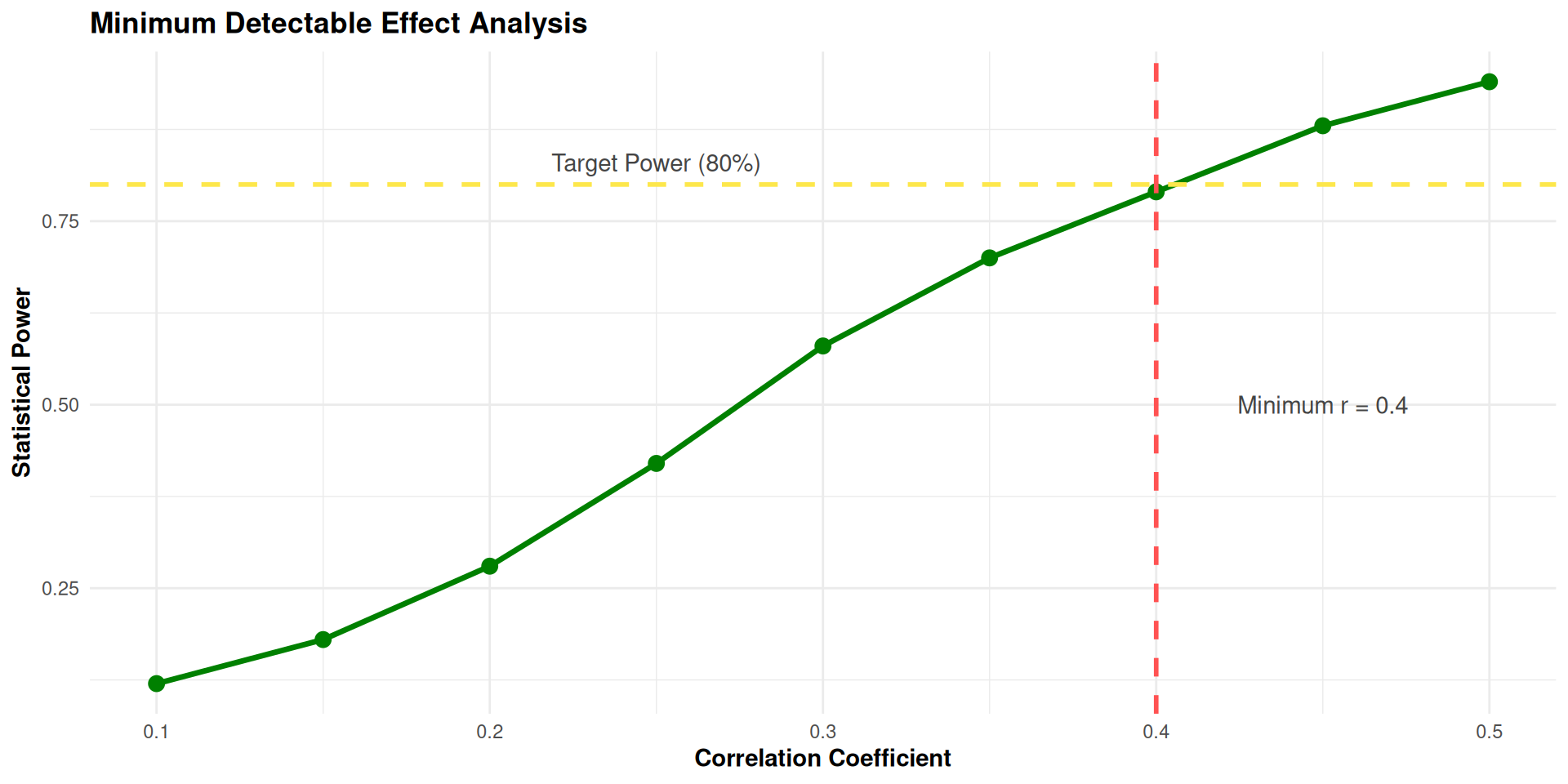

Application 2: Minimum Detectable Effect

Question:

What’s the smallest correlation between happiness and optimism we can reliably detect with our sample?

Fixed Parameters:

- Sample size: 40 participants (fixed by budget constraints)

- Desired power: 0.8 (80%)

- Alpha level: 0.05

- Factor loading: 0.7 (reliability of measures)

- Missing data: 5% (expected attrition)

Monte Carlo Approach

- Simulate data with various correlations

- Account for 5% missing data in simulations

- Adjust for measurement reliability (0.7)

- Find minimum correlation with 80% power

alpha <- 0.05

trials <- 1000

effective_n <- floor(40 * (1 - 0.05))

factor_loading <- 0.7

results <- tibble(correlation = seq(0.1, 0.9, by = 0.05)) |>

mutate(

adjusted_r = correlation * factor_loading^2,

sims = map(adjusted_r, \(r) {

tibble(trial = 1:trials) |>

mutate(

data = map(trial, \(t) {

MASS::mvrnorm(effective_n, mu = c(0, 0),

Sigma = matrix(c(1, r, r, 1), 2, 2)) |>

as_tibble(.name_repair = ~c("happiness", "optimism"))

}),

p_value = map_dbl(data, \(d) cor.test(d$happiness, d$optimism)$p.value)

)

}),

achieved_power = map_dbl(sims, \(s) mean(s$p_value < alpha))

) |>

select(correlation, achieved_power)

Conclusion: With 40 participants and 5% missing data:

- We can reliably detect correlations of r = 0.4 or larger- Smaller correlations (r < 0.4) will be harder to detect

- The 5% missing data reduces our effective sample size

- Measurement reliability of 0.7 further attenuates the detectable effect

- Researchers should focus on effects of at least medium size

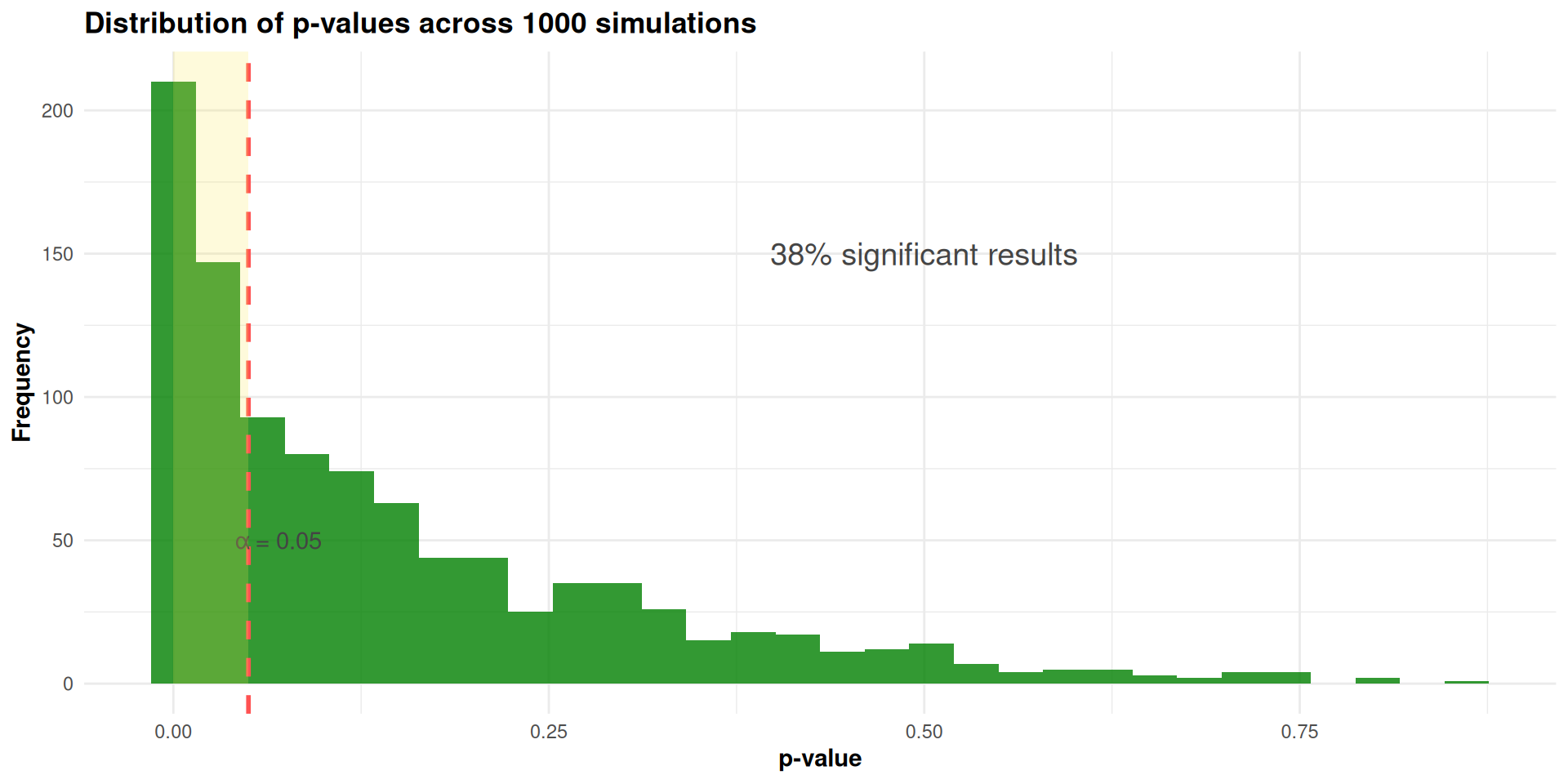

Application 3: Power Analysis

Question:

What is our statistical power to detect a correlation of 0.3 between happiness and optimism?

Fixed Parameters:

- Sample size: 60 participants (already collected)

- Alpha level: 0.05

- Target correlation: 0.3 (small-to-medium effect)

- Factor loading: 0.62 (measured reliability)

- Missing data: 10% (actual attrition)

Monte Carlo Approach

- Simulate 1000+ datasets with our parameters

- Apply 10% missing data pattern

- Adjust for measurement reliability (0.62)

- Calculate percentage of significant results

alpha <- 0.05

trials <- 1000

effective_n <- floor(60 * (1 - 0.10))

adjusted_r <- 0.3 * 0.62^2

results <- tibble(trial = 1:trials) |>

mutate(

data = map(trial, \(t) {

MASS::mvrnorm(effective_n, mu = c(0, 0),

Sigma = matrix(c(1, adjusted_r, adjusted_r, 1), 2, 2)) |>

as_tibble(.name_repair = ~c("happiness", "optimism"))

}),

p_value = map_dbl(data, \(d) cor.test(d$happiness, d$optimism)$p.value)

)

achieved_power <- mean(results$p_value < alpha)

Conclusion: For our study with 60 participants:

- Statistical power is approximately 51% to detect r = 0.3

- This is below the conventional 80% power threshold

- The 10% missing data significantly reduced our power

- Measurement reliability (0.62) further attenuated the effect

- Recommendations:

- Use more reliable measures in future studies

- Implement strategies to reduce missing data

- Consider focusing on larger effect sizes

Monte Carlo in Mplus

The Model

We want to estimate power for a structural equation model:

- A latent factor F measured by 4 continuous items (x1–x4)

- F predicts an observed outcome y

Population Parameters:

- Factor loadings: .7, 0.8, 0.7, 0.9

- Regression of y on F: 0.5

- Factor variance: 1.0

- Residual variances: 1 - loadings^2 for all items and y

The Question

Question:

With a sample of n = 40, do we have enough power to detect:

- The regression effect of

Fony(0.3)?

Answer:

Mplus can answer this using its built-in MONTECARLO: command, no external dataset needed.

The Mplus Monte Carlo Syntax

TITLE:

Monte Carlo Power Analysis:

Latent Factor (4 indicators) predicting an observed outcome

MONTECARLO:

NAMES = x1-x4 y;

NOBSERVATIONS = 40;

NREPS = 1000;

SEED = 2026;

MODEL POPULATION:

f BY x1*.7 x2*.8 x3*.7 x4*.9;

f@1;

y ON f*.3;

x1*.51;

x2*.36;

x3*.51;

x4*.19;

y*.6;

MODEL:

f BY x1* x2 x3 x4;

f@1;

y ON f*;

OUTPUT: TECH9;MONTECARLO:

NAMES = x1-x4 y;— the variables in the simulated datasetsNOBSERVATIONS = 40;— sample size per replicationNREPS = 1000;— number of replications (more = more precise power estimate)SEED = 2026;— for reproducibility

MODEL POPULATION:

The true data-generating process. Values after * are the population parameters:

f BY x1*.7 x2*.8 x3*.7 x4*.9;factor loadingsf@1;factor variance fixed at 1y ON f*.3;regression coefficientx1*.51; x2*.36; x3*.51; x4*.19;residual variances (1 minus loading squared)y*.6;residual variance of the outcome

MODEL:

The analysis model fitted to each simulated dataset. Values after * are starting values.

f BY x1* x2 x3 x4;freely estimate all loadingsf@1;fix factor variance at 1y ON f*;freely estimate the regression path

Reading the Mplus Output

After running, Mplus produces a table like this:

ESTIMATES S. E. M. S. E. 95% % Sig

Population Average Std. Dev. Average Cover Coeff

F BY

X1 1.000 0.6865 0.1438 0.1411 0.1189 0.401 0.999

X2 1.000 0.7856 0.1371 0.1356 0.0648 0.644 0.999

X3 1.000 0.6823 0.1480 0.1414 0.1228 0.402 0.996

X4 1.000 0.8835 0.1311 0.1301 0.0308 0.823 1.000

Y ON

F 0.000 0.2949 0.1328 0.1313 0.1046 0.379 0.621

Residual Variances

X1 0.500 0.4733 0.1308 0.1235 0.0178 0.884 0.998

X2 0.500 0.3376 0.1106 0.1049 0.0386 0.574 0.966

X3 0.500 0.4798 0.1319 0.1246 0.0178 0.899 0.999

X4 0.500 0.1801 0.1014 0.0970 0.1126 0.134 0.522

Y 0.500 0.5697 0.1331 0.1304 0.0226 0.951 1.000- Population the true parameter value you specified

- Average mean of estimates across 1000 replications (should be close to Population if unbiased)

- Std. Dev. standard deviation of estimates across replications (empirical SE)

- S.E. Average mean of the model-based standard errors (should be close to Std. Dev.)

- M.S.E. mean squared error (combines bias and variance)

- 95% Cover proportion of replications where the 95% CI contains the true value (should be ~0.95)

- % Sig Coeff proportion of replications where the parameter is significant (p < .05). This is the power estimate!

With n = 40:

- Regression

yONF: % Sig ≈ 0.621 (below the 0.80 threshold), and coverage is only 0.379

Conclusion: n = 40 is insufficient to detect the regression effect. We need a larger sample or ?.

The Monte Carlo Workflow in Mplus

- Specify the population model with the effect sizes you expect

- Choose a starting sample size

- Run the Monte Carlo simulation

- Check the power column for your key parameters

- If power < 0.80, increase NOBSERVATIONS

- If power > 0.95, consider whether a smaller n would suffice

- Repeat until you find the minimum n that gives adequate power

Tips

- Always set NREPS to at least 1000 for stable power estimates

- Use SEED for reproducibility

- Check the Cover column — values far from 0.95 suggest model problems

- Check that Average ≈ Population — large deviations indicate bias

- The Mplus

.inpfile for this example is available in themontecarlo/folder:power_sem.inp

Wrapping Up

Monte Carlo for Research Planning: Summary

Key Benefits:

-

Realistic planning: Accounts for real-world complexities like:

- Missing data

- Measurement error

- Complex statistical models

- Flexibility: Can simulate any research design and analysis

- Transparency: Makes assumptions explicit and testable

- Cost-effective: Optimizes resource allocation before data collection

- Reduced risk: Identifies potential issues before they happen

Practical Workflow:

- Define research questions and hypotheses

- Specify expected parameters and constraints

- Program Monte Carlo simulations

- Run simulations for various scenarios

- Visualize and interpret results

- Make informed research design decisions

- Document simulation-based decisions

The Monte Carlo approach transforms research planning from an educated guess into a data-driven decision process

The Bigger Picture

What started as a math dispute in 1905 Russia became one of the most important computational methods ever invented:

- Markov showed you can do probability with dependent events

- Ulam & von Neumann turned it into a tool for solving impossible problems

- Today it powers everything from drug trials to climate models to AI

Further Watching

The historical narrative in this lecture draws from the Veritasium video “The Surprisingly Satisfying Math Behind Markov Chains” — highly recommended for a deeper dive into the story.

Try the Markomposition tool to see Markov chains generate text!

“It is still an unending source of surprise for me to see how a few scribbles on a blackboard could change the course of human affairs.” — Stanislaw Ulam

EPH 752 Advanced Research Methods